We make it easy to try different sparsity layouts, and convert between them, without being opinionated on what's best for your particular application.

We want it to be straightforward to construct a sparse Tensor from a given dense Tensor by providing conversion routines for each layout.

A 2D Tensor with the default dense (strided) layout can be converted to a 2D Tensor backed by the COO memory layout. Only the values and indices of non-zero elements are stored in this case.

The same approach works for CSR: to_sparse_csr() on a Tensor of size (2, 2, 2) returns a Tensor with crow_indices, col_indices and values components, nnz=3 and layout=torch.sparse_csr.

Dense dimensions: On the other hand, some data such as graph embeddings might be better viewed as sparse collections of vectors instead of scalars.

Consider a 3D Hybrid COO Tensor with 2 sparse and 1 dense dimension, created from a 3D strided Tensor. If an entire row in the 3D strided Tensor is zero, it is not stored. If however any of the values in the row are non-zero, the row is stored entirely. This reduces the number of indices, since we need one index per row instead of one per element. But it also increases the amount of storage for the values, since only rows that are entirely zero can be omitted and the presence of any non-zero element causes the entire row to be stored.
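The two COO conversions described above can be sketched as follows. This is a minimal illustration rather than the original example: the tensor contents and variable names are assumptions chosen for readability, and it assumes a reasonably recent PyTorch installation.

```python
import torch

# Plain COO: a 2D strided tensor with two non-zero entries (values are illustrative).
dense_2d = torch.tensor([[0.0, 2.0], [3.0, 0.0]])
coo = dense_2d.to_sparse()          # default conversion uses the COO layout
print(coo.indices())                # one (row, col) column per non-zero element
print(coo.values())                 # the non-zero scalars themselves

# Hybrid COO: 2 sparse dimensions + 1 dense dimension.
# Each innermost row is kept whole if it contains any non-zero value.
dense_3d = torch.tensor([[[0.0, 0.0], [1.0, 2.0]],
                         [[0.0, 0.0], [3.0, 4.0]]])
hybrid = dense_3d.to_sparse(sparse_dim=2)
print(hybrid.indices())             # one index pair per stored row
print(hybrid.values())              # stored rows are length-2 vectors

# Converting back recovers the original dense tensor.
roundtrip_ok = torch.equal(hybrid.to_dense(), dense_3d)
```

Here the hybrid tensor stores two index pairs for four values, whereas a fully sparse COO tensor of the same data would store one index triple per value.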

The PyTorch API of sparse tensors is in beta and may change in the near future. We highly welcome feature requests, bug reports and general suggestions as GitHub issues.

Why and when to use sparsity

By default, PyTorch stores torch.Tensor elements contiguously in physical memory. This leads to efficient implementations of various array processing algorithms that require fast access to elements.

Now, some users might decide to represent data such as graph adjacency matrices, pruned weights or point clouds by Tensors whose elements are mostly zero valued. We recognize these are important applications and aim to provide performance optimizations for these use cases via sparse storage formats.

Various sparse storage formats such as COO, CSR/CSC, LIL, etc. have been developed over the years. While they differ in exact layouts, they all compress data through efficient representation of zero valued elements. We call the uncompressed values specified, in contrast to unspecified, compressed elements.

By compressing repeated zeros, sparse storage formats aim to save memory and computational resources on various CPUs and GPUs. Especially for high degrees of sparsity or highly structured sparsity this can have significant performance implications. As such, sparse storage formats can be seen as a performance optimization.

Like many other performance optimizations, sparse storage formats are not always advantageous. When trying sparse formats for your use case, you might find your execution time to increase rather than decrease.

Please feel encouraged to open a GitHub issue if you analytically expected to see a stark increase in performance but measured a degradation instead. This helps us prioritize the implementation of efficient kernels and wider performance optimizations.

In NumPy, float64 offers more precision than most machine learning applications need, and we can halve the memory footprint by using float32 instead. When creating an array, we can specify the dtype. For example, np.zeros and np.ones use float64 by default.
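A small sketch of the dtype point above: it creates the same array with the default dtype and with float32, then compares the memory each one uses. The array length of 1000 and the variable names are arbitrary choices for illustration.

```python
import numpy as np

default_zeros = np.zeros(1000)                    # dtype not given, so float64
compact_zeros = np.zeros(1000, dtype=np.float32)  # dtype given explicitly

print(default_zeros.dtype, default_zeros.nbytes)  # float64 8000
print(compact_zeros.dtype, compact_zeros.nbytes)  # float32 4000
```

The same dtype argument is accepted by np.ones and by np.array.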
There are multiple variations of each dtype depending on the number of bits used for representing the value in memory.

For uint we have four types: uint8, uint16, uint32 and uint64, of size 8, 16, 32 and 64 bits respectively. Likewise, we have four types of int: int8, int16, int32 and int64. The more bits we use, the larger the numbers we can store.

In the case of floats, we have three types: float16, float32 and float64. The more bits we use, the more precise the float is. For most machine learning applications, float32 is good enough: we typically don't need great precision. You can check the full list of different dtypes in the official documentation.

Note: In NumPy, the default float dtype is float64, which uses 64 bits (8 bytes) for each number.
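The relationship between bit width and range can be checked directly with np.iinfo and np.finfo. The sketch below only prints what NumPy reports for each dtype; the dictionary name int_ranges is an illustrative choice.

```python
import numpy as np

# Integer dtypes: more bits means a wider representable range.
int_ranges = {}
for dtype in (np.uint8, np.uint16, np.uint32, np.uint64,
              np.int8, np.int16, np.int32, np.int64):
    info = np.iinfo(dtype)
    name = np.dtype(dtype).name
    int_ranges[name] = (int(info.min), int(info.max))
    print(f"{name}: {info.bits} bits, range {int_ranges[name]}")

# Float dtypes: more bits means more significant decimal digits.
for dtype in (np.float16, np.float32, np.float64):
    info = np.finfo(dtype)
    print(f"{np.dtype(dtype).name}: {info.bits} bits, "
          f"about {info.precision} decimal digits")
```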

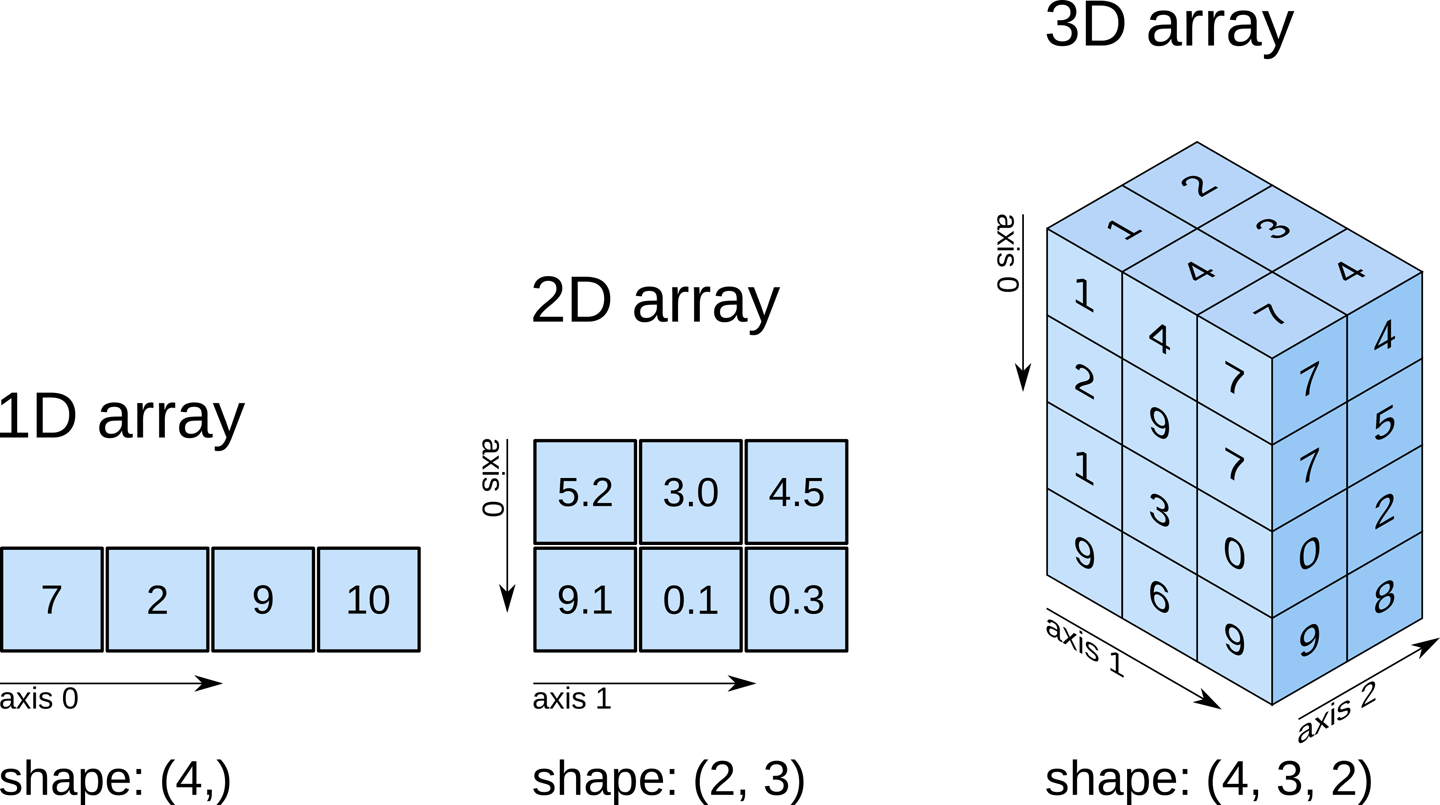

The arguments are:

- The starting number – in our case, we want to start from 0.
- The last number – we want to finish with 1.
- The length of the resulting array – in our case, we want 11 numbers in the array.

With these arguments, the call produces 11 numbers from 0 to 1.

Usual Python lists can contain elements of any type. This is not the case for NumPy arrays: all elements of an array must have the same type.

There are four broad categories of dtypes:

- Unsigned integers (uint) – integers that are always positive (or zero).
- Signed integers (int) – integers that can be positive and negative.
- Floats (float) – real numbers.
- Booleans (bool) – only True and False values.
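The text does not name the function that takes the three arguments (start, end, length). The sketch below assumes it is np.linspace, which accepts exactly that triple. It also shows the single-dtype rule: a mixed Python list is converted to one common type when it becomes an array. The variable names are illustrative.

```python
import numpy as np

# start=0, stop=1, num=11: 11 evenly spaced values, both endpoints included
grid = np.linspace(0, 1, 11)
print(grid)

# A Python list may mix types, but an array has one dtype for all elements.
mixed = [1, 2.5, True]
arr = np.array(mixed)
print(arr.dtype)  # float64: every element was converted to a common type
```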
